Introducing Paladin: Access Decisions at Machine Speed, With Human Judgment


Paladin has the context of the CISO and executes like a security engineer.

Every access reviewer is making the same impossible bet. Approve fast and risk a breach. Investigate thoroughly and become a bottleneck. Across half a million access requests on the Opal platform last year, we saw the consequences of this tradeoff play out at scale: median approval times stretching to 15 minutes, latencies exceeding 24 hours at major enterprises, and 43.5% of all granted access sitting unused for more than 90 days. Reviewers are spending hours making decisions that produce entitlements nobody uses.

Today we're making Paladin, an AI-powered access evaluation engine that eliminates this false choice, available in Early Access. It lives alongside OpalScript, a policy-as-code language, and OpalQuery, a natural-language access query environment. Together, they represent Opal's vision for what access management needs to become: a system that can see your access posture, encode your organization's policies, and enforce them autonomously.

The Problem Is Context, Not Effort

The access review bottleneck isn't laziness. It's information asymmetry. When a reviewer sees "Marcus Chen requests ADMIN access to production-db-west-2 for 48 hours," they face a wall of unanswered questions. Does Marcus normally access production databases? Is there an active incident justifying the urgency? Does a 48-hour window violate the PCI duration policy on this resource? Has his team historically needed this kind of access?

Finding those answers means toggling between Okta, PagerDuty, Opal's access logs, a data classification spreadsheet, and whatever Slack channel the on-call team uses. The cost of doing that investigation for every request is prohibitive. So reviewers take shortcuts. They approve based on gut feel, they rubber-stamp because the requester is a familiar name, or they slow-walk everything into a multi-day queue because they can't distinguish the urgent from the routine.

Our data spells out this story. At one end of the spectrum are organizations where 90% of requests clear in under 5 minutes. At the other end are organizations where 10% of requests take more than 92 hours. The gap isn't capability. It's whether the reviewer has sufficient context to decide.

An AI Reviewer or an AI Advisor: You Decide

Paladin was designed to serve as a fully capable reviewer in Opal's approval chain: a service user that can be assigned to any approval stage, just like a human reviewer. When a request arrives, Paladin investigates it the way a senior security engineer would, then acts on what it finds. If you'd prefer a more arm's-length approach, you can instead use Paladin in a familiar context: a sidebar in Risk Center.

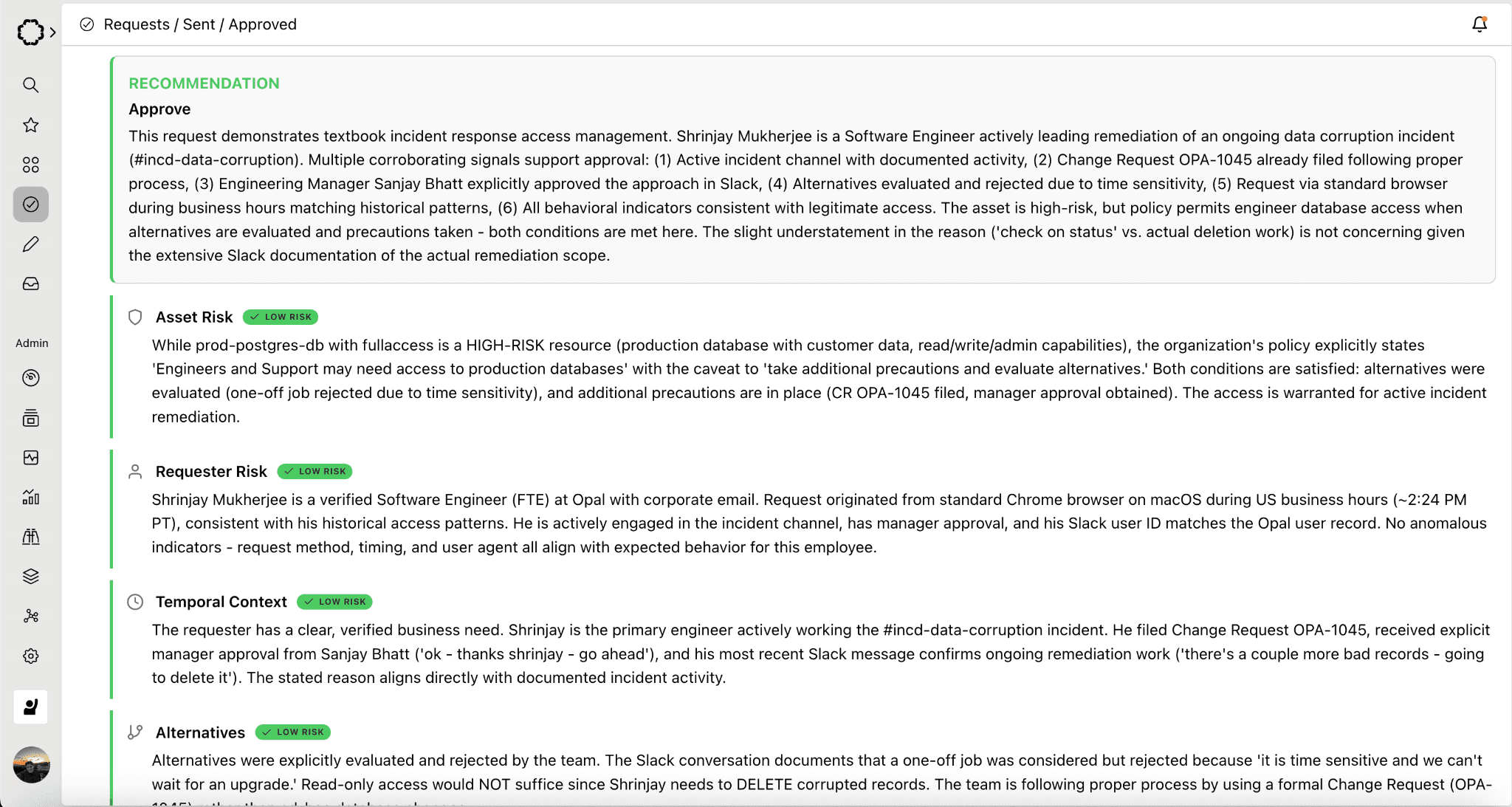

Here's what that looks like in practice:

The entire exchange — investigation, escalation, re-evaluation, approval — happens through the standard request activity feed. Every piece of reasoning is captured in the audit trail. No new infrastructure required.

What Paladin Evaluates

In its current V1 implementation, Paladin assesses:

Justification quality. Is the stated reason substantive, or is it "I need it"? Vague justifications are the single most common trigger for escalation.

Access history. Has the requester accessed this resource before? Have they made prior requests? First-time requests to sensitive resources get higher scrutiny.

Ticket correlation. If the justification references a ticket — Linear, Jira, or others — Paladin looks it up. It verifies the ticket exists, is active, and matches the requested resource. A valid ticket dramatically increases confidence.

Resource sensitivity inference. Paladin infers sensitivity from resource naming patterns and metadata. A resource called "prod-payments-db" triggers different scrutiny than "dev-sandbox-test."

Identity context. Paladin resolves the requester's role and seniority. An intern requesting admin access to a production database is a different risk profile than a senior platform engineer.

When Paladin has sufficient confidence, it approves directly. When it doesn't, it escalates with a clear explanation rather than a vague "needs review" flag: a specific accounting of what's missing and what would resolve it.
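The approve-or-escalate behavior described above can be illustrated with a small sketch. It is not Paladin's implementation: the signal names, the thresholds, and the `evaluate` function are all hypothetical, chosen only to show how an escalation can carry a specific list of what is missing instead of a generic flag.

```python
from dataclasses import dataclass


@dataclass
class RequestSignals:
    """Hypothetical signals loosely mirroring the five checks listed above."""
    justification: str
    has_prior_access: bool
    ticket_is_valid: bool
    resource_name: str
    requester_is_senior: bool


def looks_sensitive(resource_name: str) -> bool:
    # Assumption: a naive naming-pattern check stands in for sensitivity inference.
    return "prod" in resource_name.lower()


def evaluate(signals: RequestSignals) -> tuple[str, list[str]]:
    """Return ("approve", []) or ("escalate", [what is missing])."""
    missing = []
    # Assumption: fewer than five words counts as a vague justification.
    if len(signals.justification.split()) < 5:
        missing.append("justification is too vague; describe the task")
    if looks_sensitive(signals.resource_name):
        if not signals.ticket_is_valid:
            missing.append("no active ticket matching the resource")
        if not (signals.has_prior_access or signals.requester_is_senior):
            missing.append("first-time request to a sensitive resource")
    return ("approve", []) if not missing else ("escalate", missing)


decision, reasons = evaluate(RequestSignals(
    justification="I need it",
    has_prior_access=False,
    ticket_is_valid=False,
    resource_name="prod-payments-db",
    requester_is_senior=False,
))
print(decision)
for reason in reasons:
    print(" -", reason)
```

In this toy version every failed check becomes one line of the escalation message, so the requester can see exactly what to supply before the request is re-evaluated.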

The Long-Term Vision

The Paladin roadmap extends into structured multi-signal evaluation with explicit risk scores, peer analysis that compares requests against team norms, usage pattern analysis that checks whether the requester's behavior matches their justification, policy engine integration that catches duration violations and escalation requirements automatically, and eventually, continuous posture monitoring that extends beyond new requests to proactively surface risk in existing access — orphaned accounts, privilege drift, standing access that hasn't been used in months.

OpalScript: Your Policies, Executable

Paladin handles the AI-driven evaluation. But many access decisions don't need AI — they need your organization's specific rules, consistently applied. That's OpalScript.

OpalScript is a scripting language built on Starlark — the same deterministic, sandboxed language Google developed for Bazel — that lets you encode conditional access logic directly inside Opal. No external webhooks, no infrastructure to deploy, no network calls to maintain.

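```python
request = context.get_request()

# Look for any production AWS IAM role among the requested resources.
for resource in request.requested_resources:
    if resource.resource_type == "AWS_IAM_ROLE":
        if "prod" in resource.resource_name.lower():
            # Leave a comment and stop; the request stays open for a human reviewer.
            actions.comment("Production AWS access requires manual review")
            break
else:
    # Reached only when the loop finished without hitting `break`.
    actions.approve("Auto-approved: non-production access")
```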

That script runs every time an access request is routed to the service user it's attached to. It checks whether any requested resource is a production AWS IAM role, flags those for human review, and auto-approves everything else. No webhook endpoint, no Lambda function, no deployment pipeline.
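One way to sanity-check gating logic like this before attaching it to a service user is to mirror it in plain Python and run it against sample requests. The sketch below is a stand-in under stated assumptions: `Resource`, `decide`, and the returned tuples are hypothetical and not part of OpalScript. Only the field names `resource_type` and `resource_name` and the two messages come from the script above, and the sketch reads that script as "approve only when no production IAM role is found."

```python
from dataclasses import dataclass


@dataclass
class Resource:
    # Field names follow the script above; the class itself is hypothetical.
    resource_type: str
    resource_name: str


def decide(resources: list[Resource]) -> tuple[str, str]:
    """Mirror the script: comment on production IAM roles, otherwise approve."""
    for resource in resources:
        is_iam_role = resource.resource_type == "AWS_IAM_ROLE"
        if is_iam_role and "prod" in resource.resource_name.lower():
            return ("comment", "Production AWS access requires manual review")
    return ("approve", "Auto-approved: non-production access")


print(decide([Resource("AWS_IAM_ROLE", "Prod-Billing-Admin")]))
print(decide([Resource("AWS_IAM_ROLE", "dev-sandbox-readonly")]))
```

Running sample inputs this way also surfaces edge cases worth deciding on deliberately, such as a non-AWS resource whose name contains "prod", which this rule would auto-approve.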

OpalScript includes an AI assistant that generates and modifies scripts from natural language. Tell it "switch from AWS IAM roles to GCP" and it surgically updates the resource type check and the comment string while preserving all other logic. Scripts are version-controlled within Opal, execution history is tracked with timestamps and durations, and every automated decision flows through the same audit trail as human decisions.

The real power is the combination with Paladin. OpalScript handles the deterministic, rule-based decisions — environment gating, duration policies, custom field validation, tiered auto-approval. Paladin handles the judgment calls that require contextual investigation. Together, they cover the full spectrum from "this should obviously be approved" to "this needs someone to think carefully."

OpalQuery: Ask Your Access Graph Anything

Paladin enforces. OpalScript encodes. OpalQuery sees.

Every security team has the same recurring conversation with auditors, VPs, and incident responders: "Show me everyone with access to PCI-scoped systems." "Who on the platform team has admin access to production?" "Does this compromised user have access to anything else sensitive?" The answer is always the same: give us a few hours.

OpalQuery changes the economics of that question. It's an AI-powered query environment embedded in Opal where you describe what you're looking for in plain English and get structured results in seconds. Type "show me all users with access to Engineering Production and AdministratorAccess" and OpalQuery translates your intent into composable, editable structured filters — resolving "Engineering Production" against your actual resource catalog, not a fuzzy guess. You can inspect exactly what the AI built, adjust it, and run it. No black box.
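To make "composable, editable structured filters" concrete, here is a minimal sketch of what such a filter set might look like once the natural-language prompt has been translated. The `Grant` and `Filter` types, their field names, and `run_query` are illustrative assumptions, not OpalQuery's actual data model or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """Hypothetical record: one user's access level on one resource."""
    user: str
    resource: str
    access_level: str


@dataclass(frozen=True)
class Filter:
    """One editable condition: an attribute name and the exact value it must equal."""
    attribute: str
    equals: str

    def matches(self, grant: Grant) -> bool:
        return getattr(grant, self.attribute) == self.equals


def run_query(grants: list[Grant], filters: list[Filter]) -> list[str]:
    """Return the users whose grant satisfies every filter (logical AND)."""
    return sorted({g.user for g in grants if all(f.matches(g) for f in filters)})


grants = [
    Grant("ana", "Engineering Production", "AdministratorAccess"),
    Grant("ben", "Engineering Production", "ReadOnlyAccess"),
    Grant("cho", "Engineering Staging", "AdministratorAccess"),
]
filters = [
    Filter("resource", "Engineering Production"),
    Filter("access_level", "AdministratorAccess"),
]
print(run_query(grants, filters))
```

Because each condition is a separate value, a reviewer can inspect the list, drop or change one filter, and re-run the query without rewriting the prompt.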

The results are exportable as timestamped archives ready for audit evidence. Queries can be saved, shared across your organization, and re-run each audit cycle. The query you built for SOC 2 evidence last quarter is one click away when the auditor comes back.

This matters for Paladin's trajectory. As Paladin evolves toward continuous posture monitoring (surfacing risk in existing access, not just new requests), it will present its findings as pre-built OpalQuery queries, connecting automated risk detection directly to the investigative tools needed to act on it.

Why Now: The Agentic Inflection Point

The current state of access management is already strained. But the urgency isn't just about improving the status quo. It's about what's coming.

AI agents are beginning to request resource access autonomously — assembling permissions dynamically for each task, operating at compute speed, and generating request volumes that could reach 500 to 1000 times today's levels. An agent executing a multi-step workflow can't wait 15 minutes for an approval, let alone 24 hours. It blocks, retries, or fails.

At a mere 10x increase in request volume, even a moderately sized organization would need dozens of full-time reviewers doing nothing but approving access requests. We expect agent volumes to be an order of magnitude larger still, so handling the coming tidal wave with manual reviewers is simply impossible. That's not a workflow problem; it's an architectural impossibility. And every completed agent task that doesn't have its access automatically revoked leaves behind a stale entitlement, compounding the 43.5% stale access rate that already exists.
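The staffing argument is simple arithmetic, sketched below. The inputs are illustrative assumptions rather than Opal data: a baseline of 50,000 requests per year, 5 minutes of reviewer effort per request, and 1,800 working hours per reviewer per year.

```python
def reviewers_needed(requests_per_year: int,
                     minutes_per_review: float,
                     working_hours_per_year: float = 1800.0) -> float:
    """Full-time reviewers required to hand-review every request."""
    review_hours = requests_per_year * minutes_per_review / 60
    return review_hours / working_hours_per_year


BASELINE_REQUESTS = 50_000  # assumption: requests per year today
MINUTES_PER_REVIEW = 5      # assumption: reviewer effort per request

for multiplier in (1, 10, 500):
    needed = reviewers_needed(BASELINE_REQUESTS * multiplier, MINUTES_PER_REVIEW)
    print(f"{multiplier}x volume: about {needed:.1f} full-time reviewers")
```

Under these assumptions the 10x case already lands at roughly two dozen reviewers, and the 500x case exceeds a thousand; different inputs shift the numbers but not the linear scaling.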

The organizations that will navigate this transition are the ones that can grant fast, revoke automatically, and shift human review from individual requests to policies. That's exactly the architecture Paladin, OpalScript, and OpalQuery are designed for:

Paladin evaluates requests at machine speed with the investigative depth of a senior security engineer, approving what's safe and escalating what isn't.

OpalScript encodes your organization's specific rules as executable, auditable code — the policy layer that lets automation make decisions you trust.

OpalQuery gives every analyst instant visibility into who has access to what, so you can see the consequences of your policies and act on what you find.

Grant fast. Encode policy. Enforce continuously. See everything.

Getting Started

Paladin is available in Early Access. Configure it as a reviewer in any approval stage, and it will begin evaluating requests immediately — posting its reasoning on the activity feed and escalating when it can't confidently decide.

OpalScript is live for service user automations. Write scripts that run inside Opal with native access to your access graph, or use the AI assistant to generate them from natural language. View the documentation →

OpalQuery is available in Beta for Super and Read-Only Admins. Find it in the Opal sidebar, type what you're looking for, and export your results before the auditor finishes their coffee. View the documentation →

Want to see Paladin, OpalScript, and OpalQuery in action? Schedule a demo today.


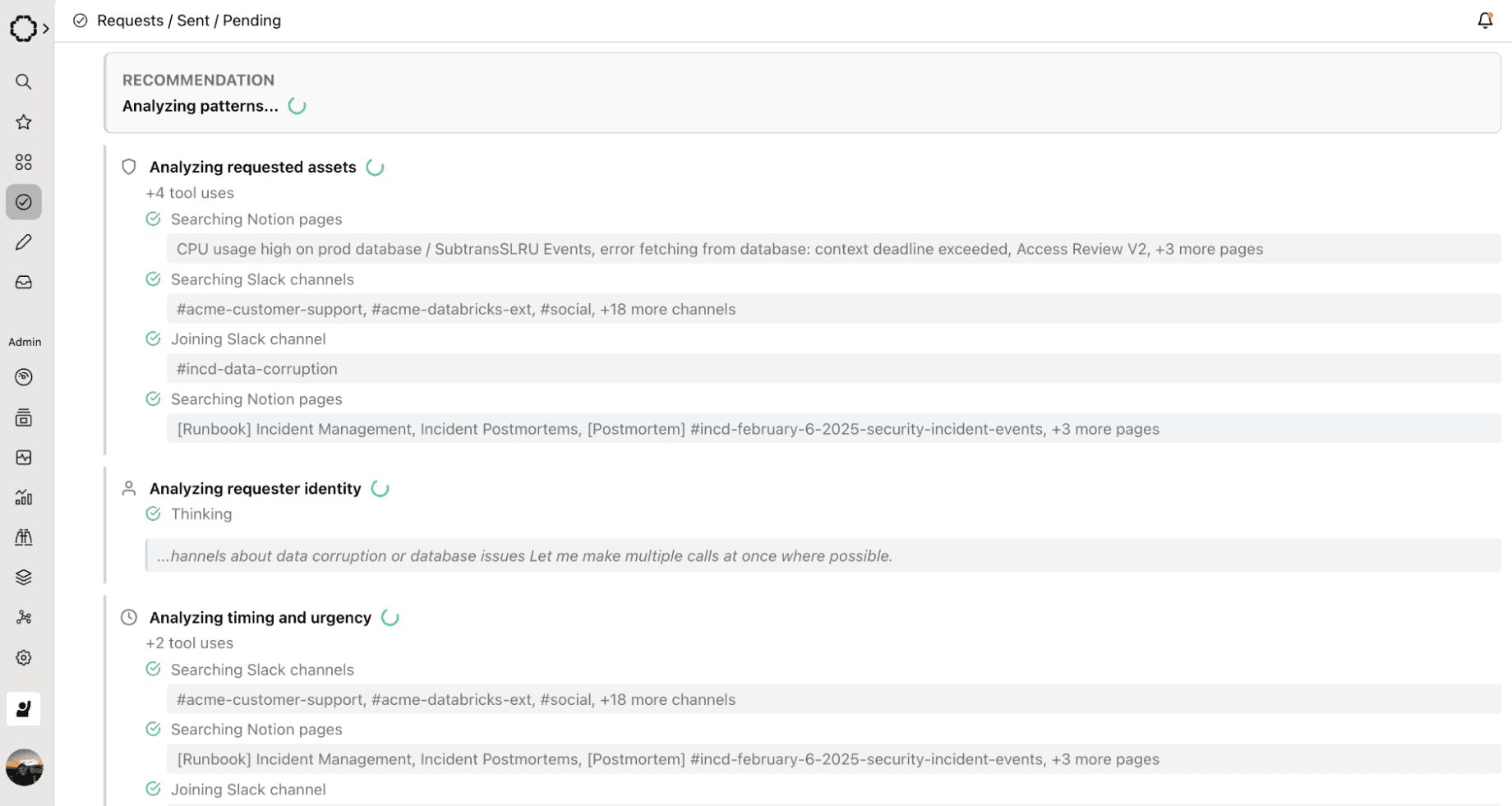

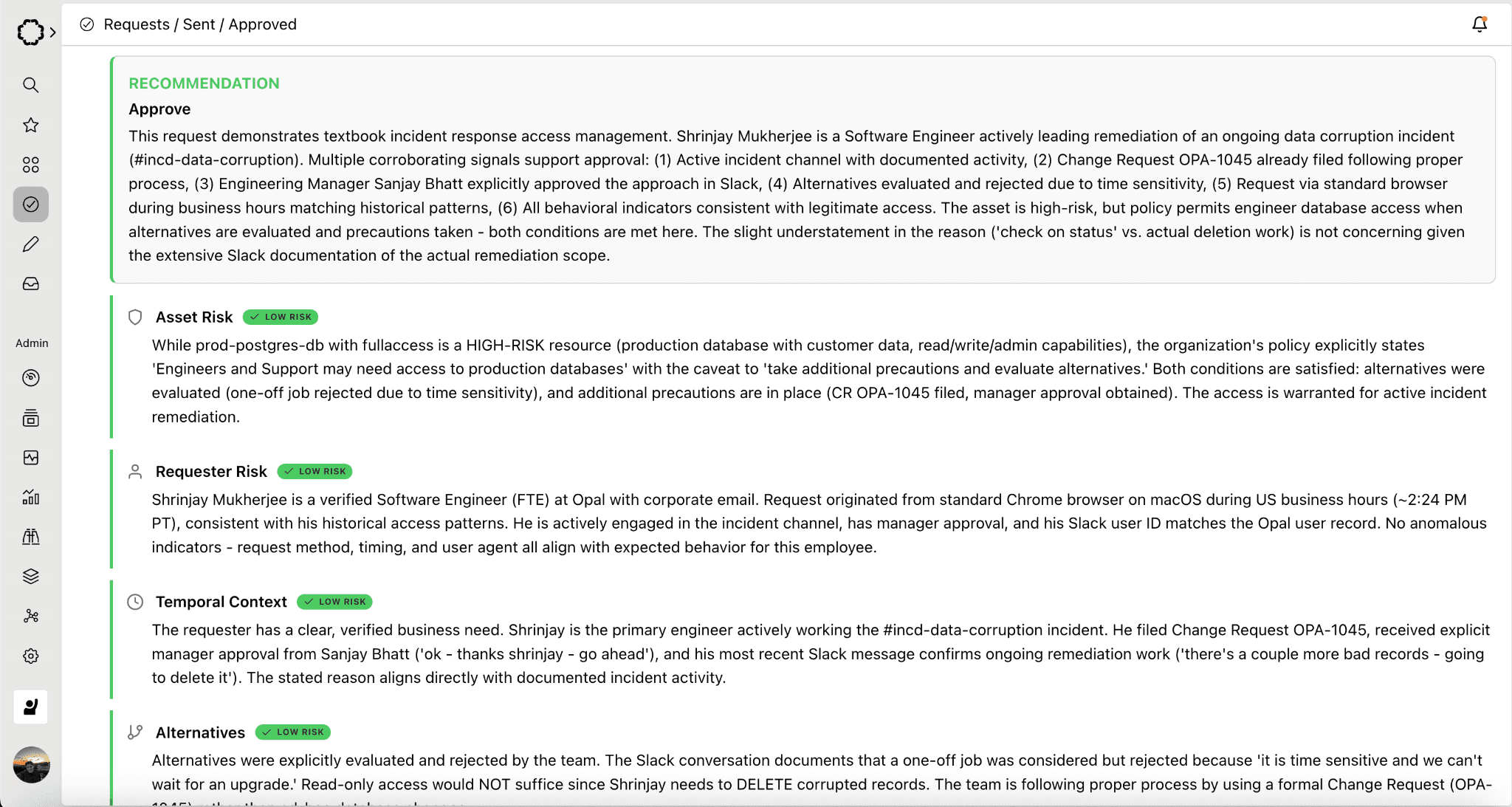

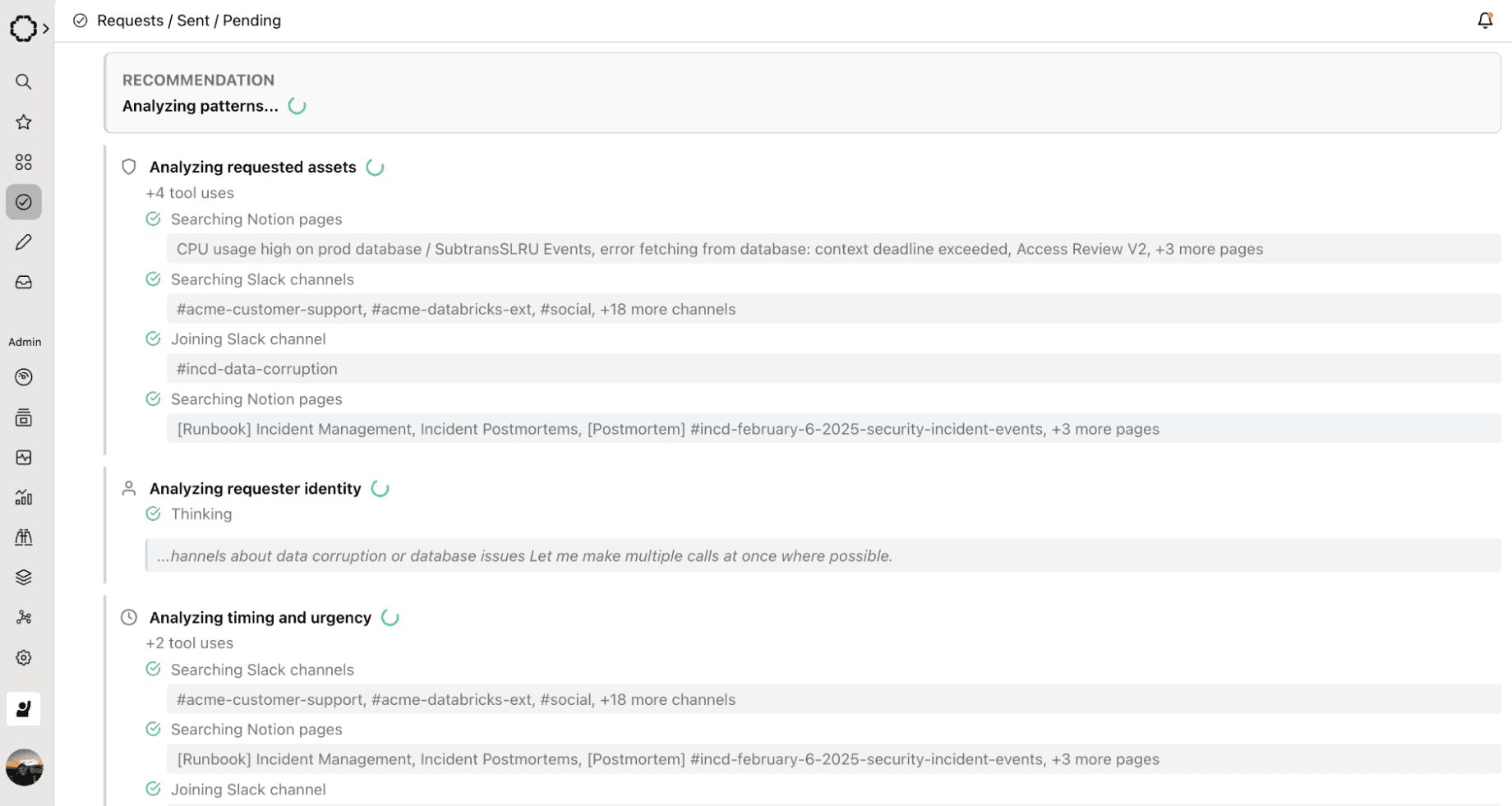

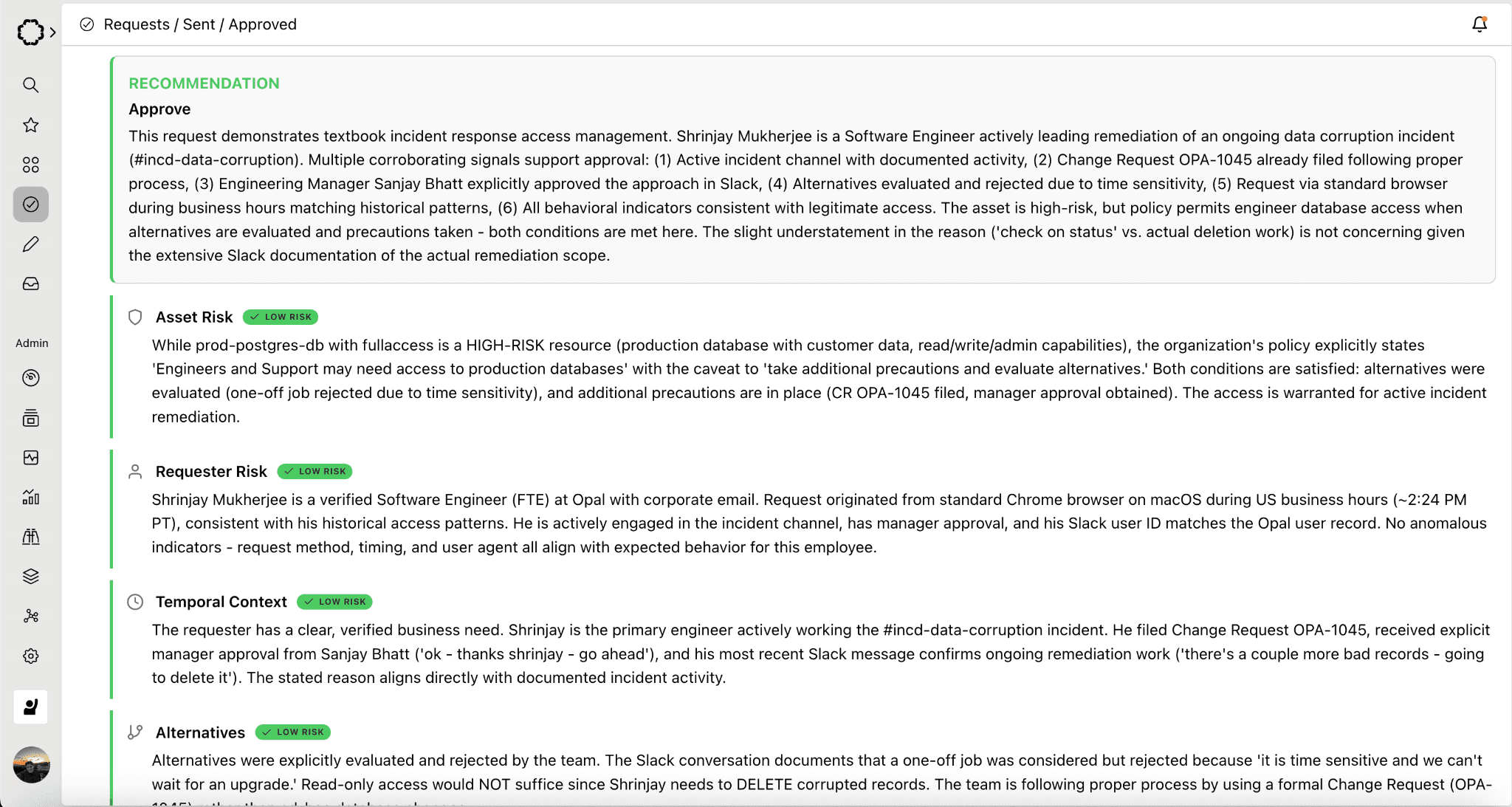

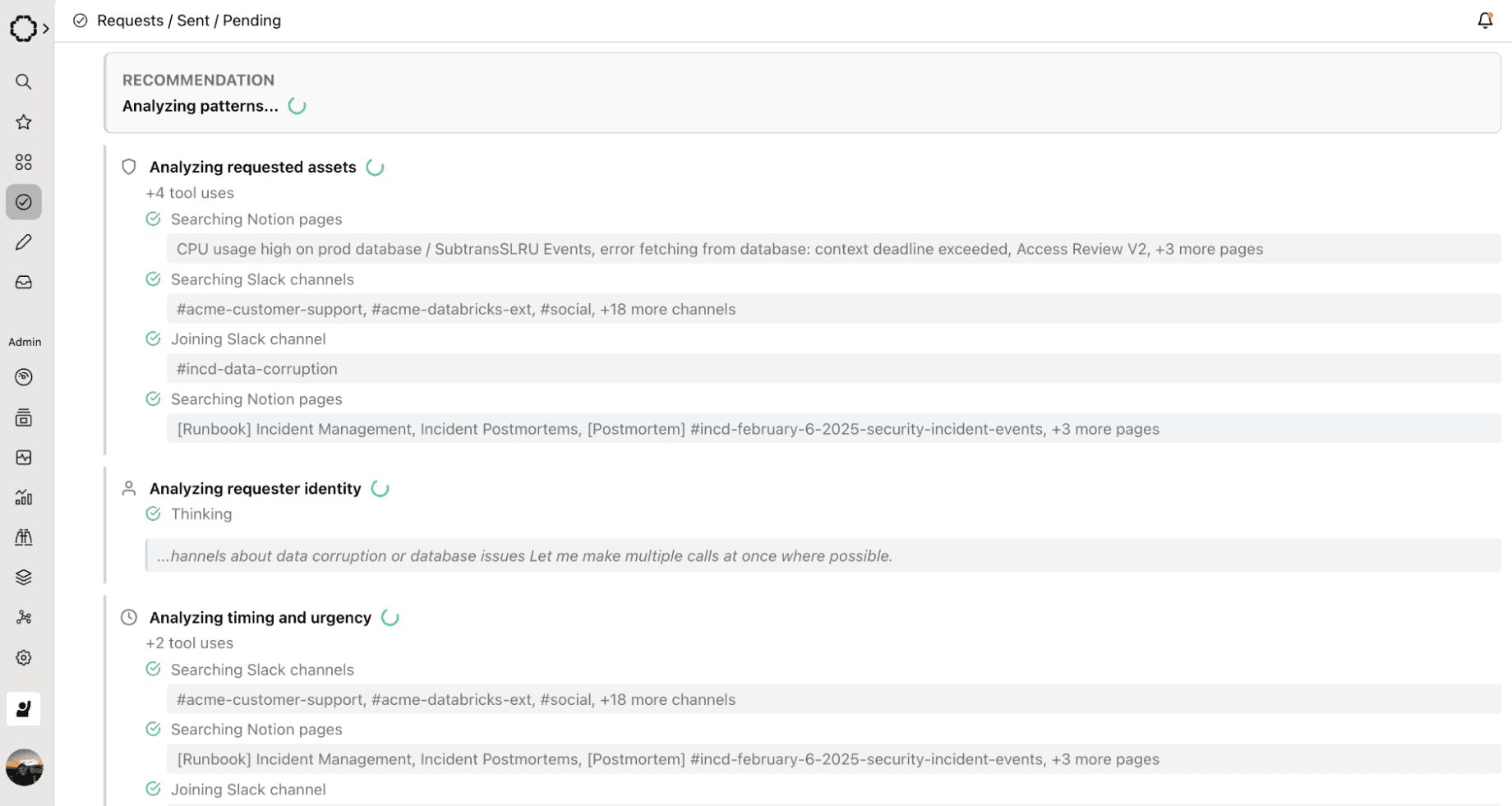

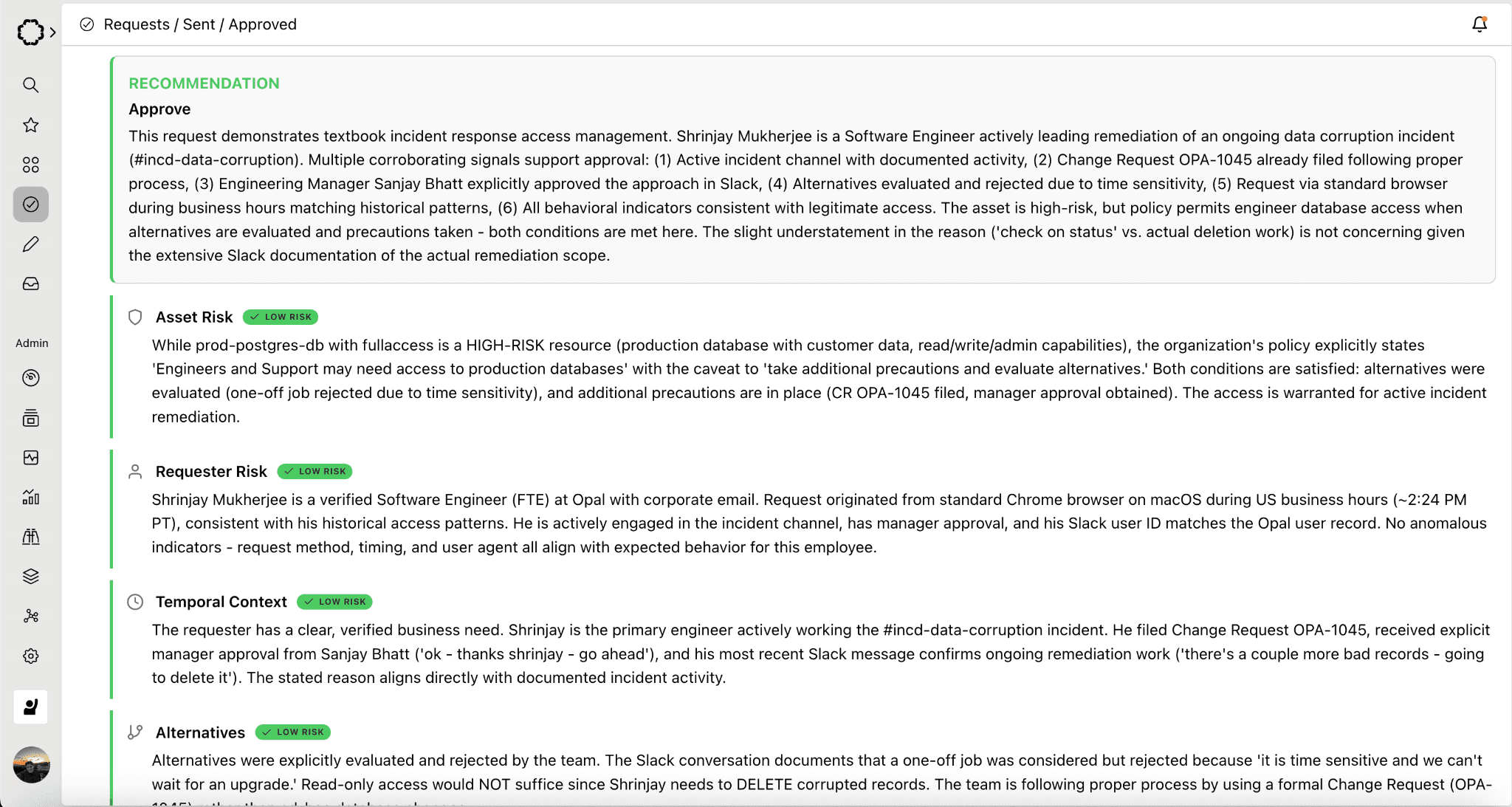

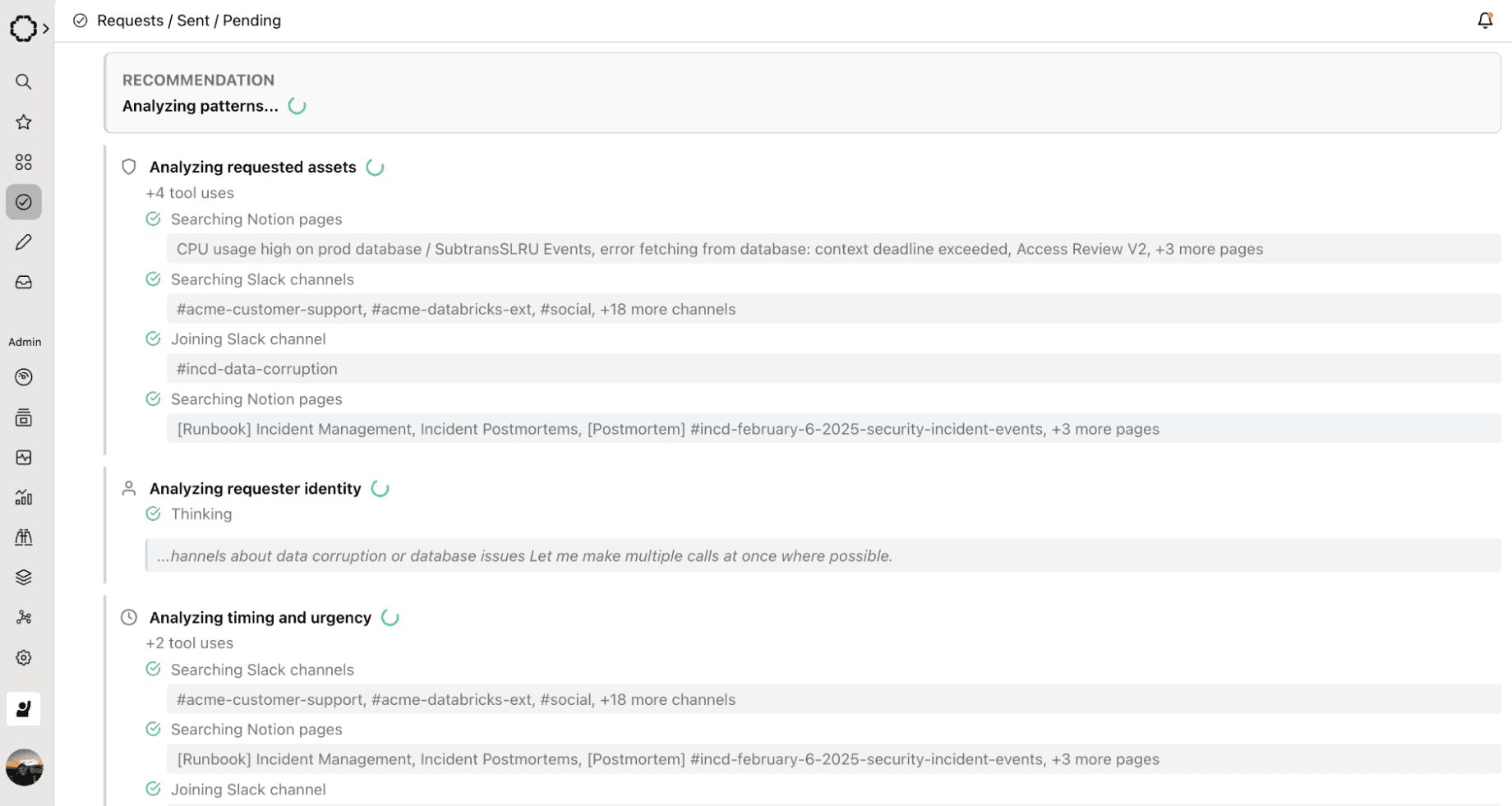

Paladin was designed to serve a fully-capable reviewer entity in Opal's approval chain — a service user that can be assigned to any approval stage, just like a human reviewer. When a request arrives, Paladin investigates it the way a senior security engineer would, then acts on what it finds. On the other hand, if you’d prefer a more arm’s length approach, you can choose to use Paladin in a familiar context: a sidebar in Risk Center.

Here's what that looks like in practice:

Expanded:

The entire exchange — investigation, escalation, re-evaluation, approval — happens through the standard request activity feed. Every piece of reasoning is captured in the audit trail. No new infrastructure required.

What Paladin Evaluates

In its current V1 implementation, Paladin assesses:

Justification quality. Is the stated reason substantive, or is it "I need it"? Vague justifications are the single most common trigger for escalation.

Access history. Has the requester accessed this resource before? Have they made prior requests? First-time requests to sensitive resources get higher scrutiny.

Ticket correlation. If the justification references a ticket — Linear, Jira, or others — Paladin looks it up. It verifies the ticket exists, is active, and matches the requested resource. A valid ticket dramatically increases confidence.

Resource sensitivity inference. Paladin infers sensitivity from resource naming patterns and metadata. A resource called "prod-payments-db" triggers different scrutiny than "dev-sandbox-test."

Identity context. Paladin resolves the requester's role and seniority. An intern requesting admin access to a production database is a different risk profile than a senior platform engineer.

When Paladin has sufficient confidence, it approves directly. When it doesn't, it escalates with a clear explanation, not a vague "needs review" flag. Paladin explicitly delivers a specific accounting of what's missing and what will resolve it.

The Long-Term Vision

The Paladin roadmap extends into structured multi-signal evaluation with explicit risk scores, peer analysis that compares requests against team norms, usage pattern analysis that checks whether the requester's behavior matches their justification, policy engine integration that catches duration violations and escalation requirements automatically, and eventually, continuous posture monitoring that extends beyond new requests to proactively surface risk in existing access — orphaned accounts, privilege drift, standing access that hasn't been used in months.

OpalScript: Your Policies, Executable

Paladin handles the AI-driven evaluation. But many access decisions don't need AI — they need your organization's specific rules, consistently applied. That's OpalScript.

OpalScript is a scripting language built on Starlark — the same deterministic, sandboxed language Google developed for Bazel — that lets you encode conditional access logic directly inside Opal. No external webhooks, no infrastructure to deploy, no network calls to maintain.

request = context.get_request() for resource in request.requested_resources: if resource.resource_type == "AWS_IAM_ROLE": if "prod" in resource.resource_name.lower(): actions.comment("Production AWS access requires manual review") break else: actions.approve("Auto-approved: non-production access")

That script runs every time an access request is routed to the service user it's attached to. It checks whether any requested resource is a production AWS IAM role, flags those for human review, and auto-approves everything else. No webhook endpoint, no Lambda function, no deployment pipeline.

OpalScript includes an AI assistant that generates and modifies scripts from natural language. Tell it "switch from AWS IAM roles to GCP" and it surgically updates the resource type check and the comment string while preserving all other logic. Scripts are version-controlled within Opal, execution history is tracked with timestamps and durations, and every automated decision flows through the same audit trail as human decisions.

The real power is the combination with Paladin. OpalScript handles the deterministic, rule-based decisions — environment gating, duration policies, custom field validation, tiered auto-approval. Paladin handles the judgment calls that require contextual investigation. Together, they cover the full spectrum from "this should obviously be approved" to "this needs someone to think carefully."

OpalQuery: Ask Your Access Graph Anything

Paladin enforces. OpalScript encodes. OpalQuery sees.

Every security team has the same recurring conversation with auditors, VPs, and incident responders: "Show me everyone with access to PCI-scoped systems." "Who on the platform team has admin access to production?" "Does this compromised user have access to anything else sensitive?" The answer is always the same: give us a few hours.

OpalQuery changes the economics of that question. It's an AI-powered query environment embedded in Opal where you describe what you're looking for in plain English and get structured results in seconds. Type "show me all users with access to Engineering Production and AdministratorAccess" and OpalQuery translates your intent into composable, editable structured filters — resolving "Engineering Production" against your actual resource catalog, not a fuzzy guess. You can inspect exactly what the AI built, adjust it, and run it. No black box.

The results are exportable as timestamped archives ready for audit evidence. Queries can be saved, shared across your organization, and re-run each audit cycle. The query you built for SOC 2 evidence last quarter is one click away when the auditor comes back.

This matters for Paladin's trajectory. As Paladin evolves toward continuous posture monitoring — surfacing risk in existing access, not just new requests — it will surface findings as pre-built OpalQuery queries. Automated risk detection connected directly to the investigative tools to act on it.

Why Now: The Agentic Inflection Point

The current state of access management is already strained. But the urgency isn't just about improving the status quo. It's about what's coming.

AI agents are beginning to request resource access autonomously — assembling permissions dynamically for each task, operating at compute speed, and generating request volumes that could reach 500 to 1000 times today's levels. An agent executing a multi-step workflow can't wait 15 minutes for an approval, let alone 24 hours. It blocks, retries, or fails.

At a mere 10x increase in request volume, even a moderately sized organization would need dozens of full-time reviewers doing nothing but approving access requests but we expect agent volumes will be an order of magnitude larger so it’s simply impossible to handle the coming tidal wave with manual reviewers. That's not a workflow problem — it's an architectural impossibility. And every completed agent task that doesn't have its access automatically revoked leaves behind a stale entitlement, compounding the 43.5% stale access rate that already exists.

The organizations that will navigate this transition are the ones that can grant fast, revoke automatically, and shift human review from individual requests to policies. That's exactly the architecture Paladin, OpalScript, and OpalQuery are designed for:

Paladin evaluates requests at machine speed with the investigative depth of a senior security engineer, approving what's safe and escalating what isn't.

OpalScript encodes your organization's specific rules as executable, auditable code — the policy layer that lets automation make decisions you trust.

OpalQuery gives every analyst instant visibility into who has access to what, so you can see the consequences of your policies and act on what you find.

Grant fast. Encode policy. Enforce continuously. See everything.

Getting Started

Paladin is available in Early Access. Configure it as a reviewer in any approval stage, and it will begin evaluating requests immediately — posting its reasoning on the activity feed and escalating when it can't confidently decide.

OpalScript is live for service user automations. Write scripts that run inside Opal with native access to your access graph, or use the AI assistant to generate them from natural language. View the documentation →

OpalQuery is available in Beta for Super and Read-Only Admins. Find it in the Opal sidebar, type what you're looking for, and export your results before the auditor finishes their coffee. View the documentation →

Want to see Paladin, OpalScript, and OpalQuery in action? Schedule a demo today.

Paladin has the context of the CISO and executes like a security engineer.

Every access reviewer is making the same impossible bet. Approve fast and risk a breach. Investigate thoroughly and become a bottleneck. Across half a million access requests on the Opal platform last year, we saw the consequences of this tradeoff play out at scale: median approval times stretching to 15 minutes, latencies exceeding 24 hours at major enterprises, and 43.5% of all granted access sitting unused for more than 90 days. Reviewers are spending hours making decisions that produce entitlements nobody uses.

Today we're making Paladin, an AI-powered access evaluation engine that eliminates this false choice, available in Early Access. It lives alongside OpalScript, a policy-as-code language, and OpalQuery, a natural-language access query environment. Together, they represent Opal's vision for what access management needs to become: a system that can see your access posture, encode your organization's policies, and enforce them autonomously.

The Problem Is Context, Not Effort

The access review bottleneck isn't laziness. It's information asymmetry. When a reviewer sees "Marcus Chen requests ADMIN access to production-db-west-2 for 48 hours," they face a wall of unanswered questions. Does Marcus normally access production databases? Is there an active incident justifying the urgency? Does a 48-hour window violate the PCI duration policy on this resource? Has his team historically needed this kind of access?

Finding those answers means toggling between Okta, PagerDuty, Opal's access logs, a data classification spreadsheet, and whatever Slack channel the on-call team uses. The cost of doing that investigation for every request is prohibitive. So reviewers take shortcuts. They approve based on gut feel, they rubber-stamp because the requester is a familiar name, or they slow-walk everything into a multi-day queue because they can't distinguish the urgent from the routine.

Our data spells out this story. At one end of the spectrum are organizations where 90% of requests clear in under 5 minutes. At the other end are organizations where 10% of requests take more than 92 hours. The gap isn't capability. It's whether the reviewer has sufficient context to decide.

An AI Reviewer or an AI Advisor: You Decide

Paladin was designed to serve a fully-capable reviewer entity in Opal's approval chain — a service user that can be assigned to any approval stage, just like a human reviewer. When a request arrives, Paladin investigates it the way a senior security engineer would, then acts on what it finds. On the other hand, if you’d prefer a more arm’s length approach, you can choose to use Paladin in a familiar context: a sidebar in Risk Center.

Here's what that looks like in practice:

Expanded:

The entire exchange — investigation, escalation, re-evaluation, approval — happens through the standard request activity feed. Every piece of reasoning is captured in the audit trail. No new infrastructure required.

What Paladin Evaluates

In its current V1 implementation, Paladin assesses:

Justification quality. Is the stated reason substantive, or is it "I need it"? Vague justifications are the single most common trigger for escalation.

Access history. Has the requester accessed this resource before? Have they made prior requests? First-time requests to sensitive resources get higher scrutiny.

Ticket correlation. If the justification references a ticket — Linear, Jira, or others — Paladin looks it up. It verifies the ticket exists, is active, and matches the requested resource. A valid ticket dramatically increases confidence.

Resource sensitivity inference. Paladin infers sensitivity from resource naming patterns and metadata. A resource called "prod-payments-db" triggers different scrutiny than "dev-sandbox-test."

Identity context. Paladin resolves the requester's role and seniority. An intern requesting admin access to a production database is a different risk profile than a senior platform engineer.

When Paladin has sufficient confidence, it approves directly. When it doesn't, it escalates with a clear explanation, not a vague "needs review" flag. Paladin explicitly delivers a specific accounting of what's missing and what will resolve it.

The Long-Term Vision

The Paladin roadmap extends into structured multi-signal evaluation with explicit risk scores, peer analysis that compares requests against team norms, usage pattern analysis that checks whether the requester's behavior matches their justification, policy engine integration that catches duration violations and escalation requirements automatically, and eventually, continuous posture monitoring that extends beyond new requests to proactively surface risk in existing access — orphaned accounts, privilege drift, standing access that hasn't been used in months.

OpalScript: Your Policies, Executable

Paladin handles the AI-driven evaluation. But many access decisions don't need AI — they need your organization's specific rules, consistently applied. That's OpalScript.

OpalScript is a scripting language built on Starlark — the same deterministic, sandboxed language Google developed for Bazel — that lets you encode conditional access logic directly inside Opal. No external webhooks, no infrastructure to deploy, no network calls to maintain.

request = context.get_request() for resource in request.requested_resources: if resource.resource_type == "AWS_IAM_ROLE": if "prod" in resource.resource_name.lower(): actions.comment("Production AWS access requires manual review") break else: actions.approve("Auto-approved: non-production access")

That script runs every time an access request is routed to the service user it's attached to. It checks whether any requested resource is a production AWS IAM role, flags those for human review, and auto-approves everything else. No webhook endpoint, no Lambda function, no deployment pipeline.

OpalScript includes an AI assistant that generates and modifies scripts from natural language. Tell it "switch from AWS IAM roles to GCP" and it surgically updates the resource type check and the comment string while preserving all other logic. Scripts are version-controlled within Opal, execution history is tracked with timestamps and durations, and every automated decision flows through the same audit trail as human decisions.

The real power is the combination with Paladin. OpalScript handles the deterministic, rule-based decisions — environment gating, duration policies, custom field validation, tiered auto-approval. Paladin handles the judgment calls that require contextual investigation. Together, they cover the full spectrum from "this should obviously be approved" to "this needs someone to think carefully."

OpalQuery: Ask Your Access Graph Anything

Paladin enforces. OpalScript encodes. OpalQuery sees.

Every security team has the same recurring conversation with auditors, VPs, and incident responders: "Show me everyone with access to PCI-scoped systems." "Who on the platform team has admin access to production?" "Does this compromised user have access to anything else sensitive?" The answer is always the same: give us a few hours.

OpalQuery changes the economics of that question. It's an AI-powered query environment embedded in Opal where you describe what you're looking for in plain English and get structured results in seconds. Type "show me all users with access to Engineering Production and AdministratorAccess" and OpalQuery translates your intent into composable, editable structured filters — resolving "Engineering Production" against your actual resource catalog, not a fuzzy guess. You can inspect exactly what the AI built, adjust it, and run it. No black box.

The results are exportable as timestamped archives ready for audit evidence. Queries can be saved, shared across your organization, and re-run each audit cycle. The query you built for SOC 2 evidence last quarter is one click away when the auditor comes back.

This matters for Paladin's trajectory. As Paladin evolves toward continuous posture monitoring — surfacing risk in existing access, not just new requests — it will surface findings as pre-built OpalQuery queries. Automated risk detection connected directly to the investigative tools to act on it.

Why Now: The Agentic Inflection Point

The current state of access management is already strained. But the urgency isn't just about improving the status quo. It's about what's coming.

AI agents are beginning to request resource access autonomously — assembling permissions dynamically for each task, operating at compute speed, and generating request volumes that could reach 500 to 1000 times today's levels. An agent executing a multi-step workflow can't wait 15 minutes for an approval, let alone 24 hours. It blocks, retries, or fails.

At a mere 10x increase in request volume, even a moderately sized organization would need dozens of full-time reviewers doing nothing but approving access requests but we expect agent volumes will be an order of magnitude larger so it’s simply impossible to handle the coming tidal wave with manual reviewers. That's not a workflow problem — it's an architectural impossibility. And every completed agent task that doesn't have its access automatically revoked leaves behind a stale entitlement, compounding the 43.5% stale access rate that already exists.

The organizations that will navigate this transition are the ones that can grant fast, revoke automatically, and shift human review from individual requests to policies. That's exactly the architecture Paladin, OpalScript, and OpalQuery are designed for:

Paladin evaluates requests at machine speed with the investigative depth of a senior security engineer, approving what's safe and escalating what isn't.

OpalScript encodes your organization's specific rules as executable, auditable code — the policy layer that lets automation make decisions you trust.

OpalQuery gives every analyst instant visibility into who has access to what, so you can see the consequences of your policies and act on what you find.

Grant fast. Encode policy. Enforce continuously. See everything.
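
One way to picture "grant fast, revoke automatically" is a grant that carries its own expiry, plus a periodic sweep that revokes whatever has lapsed. The Python below is a hypothetical sketch of that pattern, not Opal's API or data model: Grant, grant_for_task, and sweep are names invented for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


# Hypothetical model for illustration only; not Opal's API.
@dataclass(frozen=True)
class Grant:
    principal: str
    resource: str
    expires_at: datetime


def grant_for_task(principal, resource, now, ttl):
    """Issue access that is time-bound from the moment it is granted."""
    return Grant(principal, resource, now + ttl)


def sweep(grants, now):
    """Split grants into (still active, expired and due for revocation)."""
    active = [g for g in grants if g.expires_at > now]
    expired = [g for g in grants if g.expires_at <= now]
    return active, expired


start = datetime(2025, 1, 1, 12, 0)
grants = [
    grant_for_task("agent-42", "dev-sandbox-test", start, timedelta(minutes=10)),
    grant_for_task("agent-42", "prod-payments-db", start, timedelta(hours=1)),
]

# Thirty minutes later, the short-lived grant has lapsed and the other has not.
active, expired = sweep(grants, start + timedelta(minutes=30))
print([g.resource for g in active])
print([g.resource for g in expired])
```

Because expiry is attached at grant time, nothing depends on anyone remembering to clean up after an agent finishes its task, so no stale entitlement is left behind.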

Getting Started

Paladin is available in Early Access. Configure it as a reviewer in any approval stage, and it will begin evaluating requests immediately — posting its reasoning on the activity feed and escalating when it can't confidently decide.

OpalScript is live for service user automations. Write scripts that run inside Opal with native access to your access graph, or use the AI assistant to generate them from natural language. View the documentation →

OpalQuery is available in Beta for Super and Read-Only Admins. Find it in the Opal sidebar, type what you're looking for, and export your results before the auditor finishes their coffee. View the documentation →

Want to see Paladin, OpalScript, and OpalQuery in action? Schedule a demo today.
